Orbital Compute After the Hype

A concept rendering of an orbital compute megastructure over Earth, illustrating the scale and exposure that large space-based data center proposals would impose.

By Deconstruct.com

Orbital compute has moved out of the science-fiction corner and into formal filings, agency studies, company roadmaps, and prototype plans. SpaceX has asked the Federal Communications Commission for authority to deploy an “Orbital Datacenter” system of up to one million satellites between 500 and 2,000 kilometers. Google’s Project Suncatcher describes a research program built around solar-powered satellites carrying Tensor Processing Units, with two prototype spacecraft planned for early 2027. ESA frames space-based datacenters through a different lens: data is born in orbit, downlink remains scarce, and processing closer to the sensor can reduce delay and bandwidth demand.[1][2][3]

Those three examples sound related. They are. They also describe three different things. One is mission compute for data created in space. Another is station-hosted or sovereign infrastructure for clients already operating above Earth. The third is a far larger claim: that terrestrial pressure on power, water, land, and permitting will push a meaningful share of Earth’s cloud and AI workloads into orbit. The public discussion keeps sliding across those categories. That slide obscures important differences, and each claim has to be judged on its own terms.

Some forms of orbital compute already make sense. Processing data near the sensor can pay for itself when the data is created in orbit and every raw bit does not need to be pushed to Earth. Hosted nodes on existing stations or sovereign platforms have a more legible customer and operating model than a public cloud region in free flight. A smaller class of resilience-oriented storage functions also fits. The broader claim, that terrestrial cloud and AI workloads will migrate to orbit in meaningful volume, still fails under current conditions. The constraints are concrete: power generation, eclipse losses, storage mass, radiator area, launch mass, replacement cadence, gateway licensing, spectrum coordination, and debris growth. Solar power is available. Radiative cooling works. At system scale, each arrives with a bill.

Three claims, three evidence standards

The first claim is the strongest and the easiest to defend. Some workloads are born in space and lose value when every raw bit has to be pushed to Earth before any filtering or inference happens. ESA describes this plainly: one of the main challenges of current space activity is downloading data from space to Earth, and processing closer to the source can reduce that burden.[3] This is orbital compute as mission infrastructure. Earth observation, wildfire detection, maritime tracking, change detection, and anomaly triage all fit this category. The compute earns its place because it turns raw orbital data into a smaller, faster, more useful product before the downlink bill arrives.

The second claim is about compute hosted on stations for customers already operating above Earth. Axiom Space and SpaceBilt are building orbital datacenter nodes on the International Space Station and describe them as optically connected infrastructure for satellites, spacecraft, astronauts, researchers, and security-oriented clients in low Earth orbit, or LEO.[4] LEO means roughly 300 to 2,000 kilometers above Earth. This category has a different logic from consumer cloud or public AI infrastructure. It lives closer to a hosted-service model: existing platform, existing power and thermal support, clients already operating in orbit, plus strong interest in controlled data handling and Earth-independent continuity.

The third claim is the one attracting most of the attention. SpaceX says orbital datacenters are “the most efficient way” to meet accelerating AI compute demand and filed for a constellation that could scale toward one million satellites.[1] Google’s Suncatcher preprint says it is working backward from a future in which most AI computation happens in space.[2] Those are serious public claims, backed by real organizations and real engineering work. They still fall far short of proof that broad terrestrial migration closes on physics, operations, or cost.

Public evidence in this area comes in layers, and the layers should not be confused. A deployed node on ISS shows that useful compute can survive in orbit under hosted conditions. A peer-reviewed storage proposal shows that a specific resilience use case is coherent enough to model seriously. A filing for a giant constellation shows something else: intent, ambition, and a willingness to ask for the regulatory room to try. Those are all worth taking seriously. They do not prove the same thing.[1][4][5]

Energy first, heat second, then the rest of the architecture

Most arguments for orbital compute begin from the same appealing facts: the Sun is bright, space has no atmosphere, and terrestrial power queues are painful. Those statements are all true. They still leave out the two variables that dominate the design. The first is usable sunlight fraction over the full orbit. The second is heat rejection once electrical power turns into compute.

A workable first-pass power equation is simple:

P_avg ≈ I_sun × η_sys × f_sun × A_array

Where P_avg is orbit-averaged electrical power, I_sun is the solar constant near Earth, η_sys is system-level electrical yield after cell efficiency plus packaging and conversion losses, f_sun is the fraction of the orbit spent in sunlight, and A_array is deployed solar array area.[6]

Near Earth, I_sun is about 1,361 watts per square meter. That part barely changes across LEO. The real swing comes from f_sun. A satellite in generic LEO often spends only around 55 to 65 percent of each orbit in sunlight. A standard sun-synchronous orbit, or SSO, often lands closer to 70 to 85 percent over a year. An SSO is a near-polar, usually retrograde LEO whose orbital plane precesses at nearly the same rate Earth moves around the Sun, so the satellite crosses a given latitude at roughly the same local solar time on each pass. In practice that requirement ties altitude and inclination together fairly tightly, which is why many Earth-observation systems cluster in similar bands, often around 600 to 800 kilometers and about 98 degrees inclination. A dawn-dusk sun-synchronous orbit, sometimes called a terminator orbit, is a more constrained subset of SSO. It keeps that solar crossing time near sunrise or sunset rather than merely keeping it fixed. ESA describes the appeal directly: a dawn-dusk SSO lets the satellite ride a constant sunrise or sunset and avoid Earth’s shadow for much of the year.[9]
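The link between orbit geometry and f_sun can be sketched with a standard textbook approximation. The snippet below assumes a circular orbit, a spherical Earth, and a cylindrical shadow with no penumbra, and it introduces one quantity the text does not name: the beta angle, meaning the angle between the orbital plane and the direction to the Sun. The function name and the sample beta values are illustrative choices, not taken from any cited source.

```python
import math

R_EARTH_KM = 6371.0  # mean Earth radius (spherical-Earth assumption)

def sunlight_fraction(altitude_km: float, beta_deg: float) -> float:
    """Fraction of a circular orbit spent in sunlight (cylindrical-shadow model)."""
    r = R_EARTH_KM + altitude_km
    beta = math.radians(abs(beta_deg))
    # Above this critical beta angle the orbit never enters Earth's shadow.
    beta_crit = math.asin(R_EARTH_KM / r)
    if beta >= beta_crit:
        return 1.0
    # Distance from the satellite to the horizon tangent point.
    horizon = math.sqrt(altitude_km**2 + 2.0 * R_EARTH_KM * altitude_km)
    # Clamp guards against floating-point overshoot just below beta_crit.
    ratio = min(1.0, horizon / (r * math.cos(beta)))
    eclipse_fraction = math.acos(ratio) / math.pi
    return 1.0 - eclipse_fraction

for beta in (0, 30, 60, 75):
    print(f"beta={beta:>2} deg  f_sun={sunlight_fraction(550, beta):.3f}")
```

At 550 kilometers, a plane that contains the Sun direction (beta of zero) comes out a little above 60 percent sunlight, while a plane close to the terminator sees no eclipse at all in this simplified model. Real orbits drift in beta over the year, so an annual average sits between those extremes.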

That geometry does real economic work. Suppose a compute node needs 39 kilowatts of continuous facility power, a scale large enough to hold a serious compute payload and still small enough to think about as a single spacecraft. Use η_sys in the 0.20 to 0.25 range after accounting for cell efficiency, packaging, and conversion overhead. In a favorable dawn-dusk SSO with f_sun ≈ 0.98, the required array area comes out around 115 to 150 square meters:

A_array ≈ 39,000 / (1,361 × 0.20–0.25 × 0.98) ≈ 115–150 m²
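The sizing step is just the power equation solved for area, which makes it easy to check by hand or in a few lines of Python. The sketch below uses the 39 kilowatt node and the solar constant from the text; the function name and the particular η_sys sweep points are illustrative assumptions.

```python
SOLAR_CONSTANT_W_M2 = 1361.0  # I_sun near Earth, as quoted in the text

def array_area_m2(p_avg_w: float, eta_sys: float, f_sun: float) -> float:
    """Invert P_avg = I_sun * eta_sys * f_sun * A_array to get A_array."""
    return p_avg_w / (SOLAR_CONSTANT_W_M2 * eta_sys * f_sun)

P_NODE_W = 39_000.0  # continuous facility power per node, from the text

# Sweep a few system-level yields for the dawn-dusk case (f_sun = 0.98).
for eta in (0.20, 0.25, 0.30):
    area = array_area_m2(P_NODE_W, eta, f_sun=0.98)
    print(f"eta_sys={eta:.2f}  A_array={area:6.1f} m^2")
```

Because the area scales as 1 / (η_sys × f_sun), a small loss in either factor shows up directly as more deployed array. The same function accepts any sunlight fraction, so other orbits can be compared by changing one argument.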

Now rerun the same node in ordinary LEO with f_sun ≈ 0.60. Array area jumps toward roughly 190 to 245 square meters, and the design also inherits a large storage bill for eclipse ride-through. The storage equation is as blunt as the array equation:

E_storage ≈ P_load × t_eclipse / η_rt

For a 39 kilowatt node, a 30-minute eclipse, and 90 percent round-trip efficiency, the delivered-energy requirement lands around 22 kilowatt-hours per node (39 kW × 0.5 h ÷ 0.9), before any margin for depth of discharge or cell aging. A 160-node constellation would then carry roughly 3.5 megawatt-hours of storage just to preserve continuity through eclipse, and every pack would cycle roughly fifteen times a day, once per orbit. The optimistic orbital-power story therefore leans heavily on favorable orbit geometry.[6][9]
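The storage equation is simple enough that the main risk is a units slip between minutes and hours, so it helps to write the conversion out. The sketch below is a minimal check under the inputs named in the text (39 kilowatts, 30 minutes, 90 percent round-trip efficiency, 160 nodes); the function name is hypothetical, and the result is delivered energy only, with no allowance for depth-of-discharge limits or degradation.

```python
def eclipse_storage_kwh(p_load_kw: float, t_eclipse_min: float, eta_rt: float) -> float:
    """E_storage = P_load * t_eclipse / eta_rt, with the eclipse converted to hours."""
    return p_load_kw * (t_eclipse_min / 60.0) / eta_rt

NODES = 160  # fleet size used elsewhere in the text

per_node_kwh = eclipse_storage_kwh(p_load_kw=39.0, t_eclipse_min=30.0, eta_rt=0.90)
fleet_mwh = per_node_kwh * NODES / 1000.0

print(f"per node: {per_node_kwh:.1f} kWh")
print(f"{NODES}-node fleet: {fleet_mwh:.2f} MWh")
```

A practical battery would be sized well above this floor, since packs that cycle every orbit are usually operated at a limited depth of discharge. That oversizing factor is a design choice and is not specified in the sources cited here.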

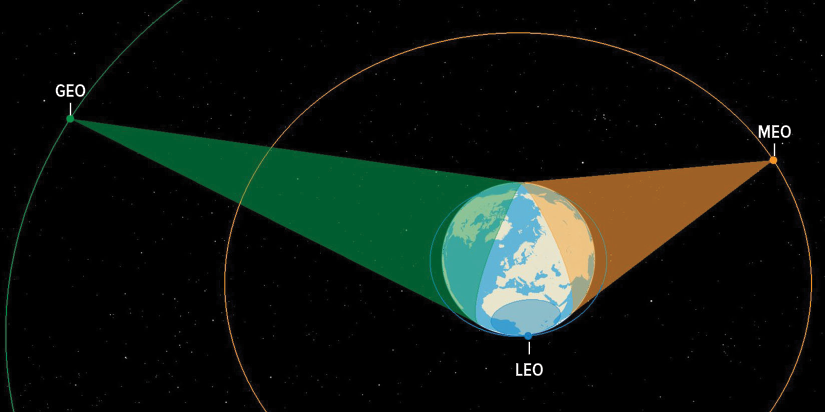

This illustration represents a three-dimensional depiction of the viewing area (field of regard) of satellites in LEO (1,000 km), MEO (18,000 km), and GEO (35,786 km). The coverage area represented here takes into account the Earth’s geometry but not other variables, such as any sensor-related limitations on viewing angle.

Source: Congressional Budget Office/Large Constellations of Low-Altitude Satellites: A Primer

There is a second consequence beyond power continuity. People talk about orbit as though it were an unlimited expanse. The power model says otherwise. Once a compute architecture depends on near-continuous sunlight, the useful part of low Earth orbit shrinks fast. The favored regime is not ‘space’ in general. It compresses to a set of sun-synchronous bands, especially dawn-dusk geometries, where eclipse burden stays low enough to keep storage and thermal penalties from running away. SSO does not concentrate spacecraft into one fixed geographic patch over Earth. Earth still rotates underneath the orbit, so different SSOs sample many longitudes and can differ in local crossing time, altitude, phasing, and repeat cycle. The concentration shows up instead in orbital-element space. Once the mission requires a fixed local solar time, the design space narrows into a smaller family of near-polar planes and useful altitude bands than generic LEO would allow, and dawn-dusk missions narrow it further. That is why SSO behaves less like a single belt in the GEO sense and more like a preferred family of shells and planes that many operators want at once. In this paper’s own first-pass model, that points to roughly 550 to 650 kilometers. That is already a busy neighborhood. So the solar advantage carries a hidden trade: it makes the architecture more dependent on a smaller set of desirable shells. The system gains energy continuity by giving up orbital flexibility.

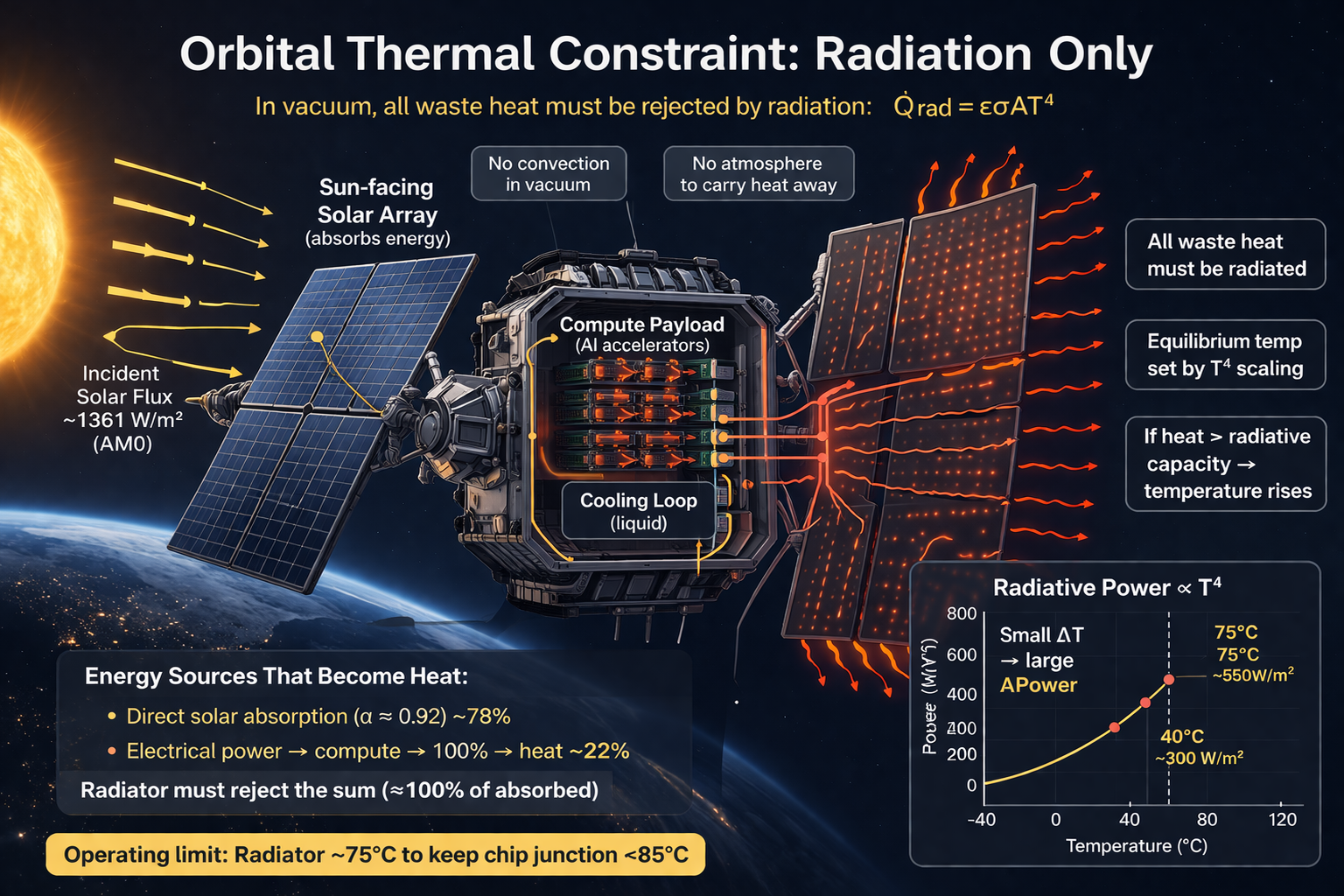

Then comes heat. A processor in orbit still turns most of its electrical input into waste heat. But the thermal burden does not begin and end with the IT load. Solar arrays absorb the irradiance that hits them. Some fraction is converted into electrical power and then largely ends up as heat once onboard systems use it. Much of the rest is absorbed directly as thermal load in the structure. The spacecraft therefore has to reject not just processor waste heat, but the broader absorbed energy budget of the vehicle.[7][10] On Earth that heat can be dumped through air systems, liquid loops, evaporative towers, heat exchange with ambient air, or district energy reuse. In space the final sink is radiation. NASA’s current thermal-control guidance expresses outgoing radiative heat as a function of radiator area, emissivity, and temperature relative to the sink.[10] In compact form the ceiling looks like this:

Q ≈ εσAT⁴

Take a radiator emissivity of 0.9 and a radiator temperature between 280 and 320 kelvin. A 39 kilowatt heat load then drives radiator area on the order of 70 to 130 square meters. Multiply that by a 160-node constellation sized to preserve 5 megawatts after 20 percent attrition and the fleet lands around 11,000 to 21,000 square meters of radiator area. If you want warmer radiators, the area falls but chip temperatures and thermal margins get harder. If you want cooler radiators, the area swells. There is no free exit from that trade.[10][11]
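The radiator figures follow from inverting the radiation equation for area. The sketch below assumes an idealized single-sided radiator that sees only deep space, with a sink temperature of zero and no absorbed sunlight, albedo, or Earth infrared, so real areas would be larger. The function name and the 300 kelvin midpoint are illustrative additions.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4

def radiator_area_m2(q_w: float, emissivity: float, temp_k: float) -> float:
    """Invert Q = eps * sigma * A * T^4 for A (ideal radiator facing deep space)."""
    return q_w / (emissivity * SIGMA * temp_k**4)

Q_NODE_W = 39_000.0   # heat load per node, from the text
NODES = 160           # fleet size, from the text
ATTRITION = 0.20      # fraction of nodes assumed lost, from the text

for temp_k in (280.0, 300.0, 320.0):
    per_node = radiator_area_m2(Q_NODE_W, 0.9, temp_k)
    print(f"T={temp_k:.0f} K  per node={per_node:6.1f} m^2  fleet={per_node * NODES:8.0f} m^2")

# Cross-check the fleet sizing: surviving nodes times per-node power.
surviving_mw = NODES * (1.0 - ATTRITION) * Q_NODE_W / 1e6
print(f"surviving capacity: {surviving_mw:.2f} MW")
```

The fourth-power dependence on temperature is what makes the trade so sharp: moving from 280 to 320 kelvin cuts the ideal area by roughly 40 percent, while the chips behind the radiator lose the same 40 kelvin of thermal headroom.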

This is where the “space is cold” line breaks apart. Space is a good sink for radiation. It is a poor place to pretend conduction and convection still exist. Wired’s physics review cut through the trope with unusual clarity: large orbital compute systems need very large radiators, and the geometry becomes uglier as power climbs.[11] NASA’s SmallSat thermal guidance says the same thing in agency language. Active thermal control at high heat loads runs straight into power, mass, and volume penalties.[10]

All heat in orbit must be radiated away. That includes waste heat from compute and energy absorbed from sunlight, which together set the required radiator area and operating temperature.

The same pressure shows up in the public cost work. Andrew McCalip’s orbital-versus-terrestrial model, widely referenced by both IEEE Spectrum and TechCrunch, uses a 1 gigawatt electrical target over five years. Under his default “orbital solar” settings, the model lands around $31.2 billion for the space system versus $14.8 billion for the terrestrial baseline, with about 37,000 satellites, 22.2 million kilograms to LEO, and 2.3 square kilometers of fleet array area.[12] TechCrunch’s more narrative treatment of the same model puts the space system at about $42.4 billion, close to a threefold premium relative to the terrestrial case.[13] IEEE Spectrum gives a similar headline number, describing a roughly $50 billion one-gigawatt orbital network over five years, again near three times terrestrial cost, and illustrates the scale with about 4,300 satellites at roughly 240 to 250 kilowatts each.[14]

Those models do a lot of work in the public debate because they are the first widely cited attempts to force the conversation into numbers rather than slogans. They still depend on optimistic assumptions: high sunlight fraction, aggressive launch economics, Starlink-like manufacturing logic, and bounded replacement overhead. Even under those friendlier assumptions, the space case still opens at a steep premium.[12][13][14]
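Fleet totals are easier to compare with the single-node arithmetic earlier in this piece once they are reduced to per-satellite figures. The sketch below does only that, using the default-case numbers quoted from McCalip’s model and assuming, as a simplification of this sketch rather than of the model, that power, mass, and array area are split evenly across the fleet.

```python
# Figures quoted in the text for the default "orbital solar" case [12].
TARGET_POWER_W = 1.0e9
SATELLITES = 37_000
MASS_TO_LEO_KG = 22.2e6
ARRAY_AREA_M2 = 2.3e6        # 2.3 square kilometers
COST_SPACE_USD_B = 31.2
COST_GROUND_USD_B = 14.8

# Naive even split across the fleet (an assumption of this sketch).
per_sat_kw = TARGET_POWER_W / SATELLITES / 1e3
per_sat_kg = MASS_TO_LEO_KG / SATELLITES
per_sat_array_m2 = ARRAY_AREA_M2 / SATELLITES
specific_power_w_per_kg = TARGET_POWER_W / MASS_TO_LEO_KG
cost_ratio = COST_SPACE_USD_B / COST_GROUND_USD_B

print(f"power per satellite: {per_sat_kw:.1f} kW")
print(f"mass per satellite:  {per_sat_kg:.0f} kg")
print(f"array per satellite: {per_sat_array_m2:.1f} m^2")
print(f"specific power:      {specific_power_w_per_kg:.1f} W/kg")
print(f"space/ground cost:   {cost_ratio:.2f}x")
```

Read this way, the default case implies spacecraft in the tens of kilowatts each, the same order as the 39 kilowatt node used above, which is a useful sanity check that the fleet model and the single-node physics are describing similar machines.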

Inter-Satellite Links Expand Capacity. Ground Gateways Still Limit It.

Modern orbital systems no longer depend on a single satellite waiting for its next ground pass. They increasingly behave as moving networks. Inter-satellite links, or ISLs, let traffic move across the constellation toward available gateways instead of sitting idle on one node until contact opens. Optical ISLs matter most here because they offer much higher throughput than older radio-frequency designs and make relay layers, path diversity, and tighter coordination between sensing, processing, and delivery far more plausible.[15] NASA treats that shift seriously enough to frame optical communications as a way around the bandwidth and antenna limits of conventional radio systems. TBIRD pushed the point into hardware, delivering 4.8 terabytes in five minutes at 200 gigabits per second from a 6U CubeSat to a ground station.[16]

Yet success in communications does not settle the broader case. The last hop to Earth still has to pass through real gateways, real licensing, real weather, and real coexistence rules. NASA’s own optical-communications overview lists clouds, mist, and atmospheric interference as first-order challenges and explains why multiple ground stations are required for continuity.[15] New America’s recent LEO spectrum work sharpens the policy side of the same point. Large constellations above the United States run through the Federal Communications Commission’s Part 25 licensing regime. Those applications can take anywhere from one to nearly four years. Each constellation application is individually reviewed, often carries system-specific conditions, and includes narrative, technical annexes, orbital debris plans, and International Telecommunication Union coordination material. Gateway siting can add another year and substantial cost in urban or suburban areas where low-latency backhaul is attractive.[17]

More bandwidth does not help if the network keeps getting stuck at the Earth crossing or in the queue behind it. Large constellations still need gateway approvals, spectrum coordination, backhaul, launches, ground stations, and replacement satellites. That is why New America treats vertical integration as a serious advantage in LEO. A company that controls more of those pieces can move faster and absorb more delays inside the system. A company that depends on outsiders for every critical step inherits more waiting, more coordination failures, and more ways for the whole network to stall.[17][18]

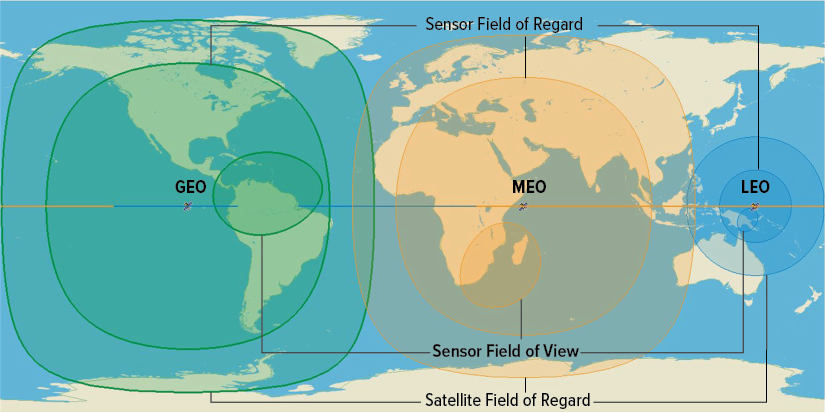

If the viewing angle must be at least 20 degrees above the horizon, the sensor’s field of regard (intermediate circles) is smaller than the satellite’s field of regard (outer circles). A camera with a very wide field of view might be able to view everywhere within its field of regard, but most sensors look at an area that is much smaller than their field of regard at any given moment (smallest oval).

Source: Congressional Budget Office/Large Constellations of Low-Altitude Satellites: A Primer

The Congressional Budget Office’s (CBO) large-constellation primer provides a clean geometric backdrop for this. A LEO satellite can view a given point on Earth for only about ten minutes. Comparable coverage across orbital regimes can look radically different in fleet size: one illustrative GEO constellation with four satellites, an MEO constellation with eight, and a LEO constellation with seventy-two can each provide broad coverage, yet the LEO system pays for that coverage with many more spacecraft and a much heavier replenishment rhythm. CBO also says LEO satellites are generally designed around five-year average lives, versus roughly ten years for MEO and fourteen for GEO.[19]
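A pass time of that order falls out of basic geometry. The sketch below estimates the longest possible pass for a satellite that flies directly overhead, assuming a circular orbit and a spherical Earth and ignoring Earth’s rotation. The 1,000 kilometer altitude and 20 degree minimum viewing angle match the CBO figure captions; the function name and the 550 kilometer comparison case are illustrative choices, and this is not presented as CBO’s own method.

```python
import math

R_EARTH_KM = 6371.0        # mean Earth radius
MU_KM3_S2 = 398_600.4418   # Earth's gravitational parameter

def max_pass_minutes(altitude_km: float, min_elevation_deg: float) -> float:
    """Longest overhead pass above a minimum elevation angle, in minutes."""
    r = R_EARTH_KM + altitude_km
    elev = math.radians(min_elevation_deg)
    # Earth central angle from the ground point to the edge of visibility.
    central = math.acos(R_EARTH_KM * math.cos(elev) / r) - elev
    # Orbital period from Kepler's third law.
    period_s = 2.0 * math.pi * math.sqrt(r**3 / MU_KM3_S2)
    # The satellite sweeps 2 * central out of a full 2 * pi revolution.
    return (2.0 * central / (2.0 * math.pi)) * period_s / 60.0

for altitude in (550.0, 1000.0):
    minutes = max_pass_minutes(altitude, min_elevation_deg=20.0)
    print(f"h={altitude:.0f} km  max pass ~ {minutes:.1f} min")
```

Passes that do not go directly overhead are shorter, so this is an upper bound for each altitude. Lower shells shorten the window further, which is one reason dense LEO constellations need so many spacecraft to keep any given point in view.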

That is why communications help some orbital compute cases far more than others. Routed meshes and optical links are excellent for mission data, relay-adjacent compute, and some station-hosted or sovereign services. They do far less for the fantasy that Earth’s mainstream cloud can move upward just because the network is clever.

Where the case closes today

The strongest surviving branch is mission-linked processing. ESA’s public framing of space-based datacenters is built around the difficulty of downloading raw data from space and the value of processing in orbit before downlink.[3] That logic fits Earth observation especially well. A wildfire-monitoring spacecraft rarely needs every raw frame on the ground before any value appears. A perimeter estimate, hotspot classification, smoke motion vector, or change-detection mask often carries the operational value. In those cases compute near the sensor can lower the transmission bill and reduce response time.

A second credible branch is station-hosted compute. Axiom’s ISS roadmap is a concrete example rather than a generic aspiration. The company says it has already deployed cloud computing capabilities on station, launched AxDCU-1 in 2025, and plans at least three interoperable orbital datacenter (ODC) nodes by 2027, connected to satellites through optical terminals.[4] That architecture benefits from existing station infrastructure, hosted operations, and a customer base already living in orbit or sending assets there. It still faces radiation, replacement, and thermal design pressure. Even so, it is far easier to understand than a free-flying megawatt cloud region built for public AI inference.

A third branch is resilience storage. The Scientific Reports paper on O-RAID proposes orbital backup storage as a distributed redundant-array architecture for archives, disaster recovery, and long-term preservation, with technical readiness projected around 2035.[5] The paper overshoots in places, especially around “eliminates cooling costs entirely,” which clashes with standard spacecraft thermal physics. Even so, its core use case is far more legible than the broad cloud-substitution thesis. Cold backup and archival storage tolerate latency, accept scheduled ground contacts, and value geographic detachment in a way that mainstream production cloud rarely can.

Sovereign and defense demand forms another credible branch. The purchase is continuity and control under disruption. The Space Development Agency’s Tracking Layer is built for global missile warning, tracking, and targeting of advanced threats, including hypersonic missile systems, and explicitly describes algorithms, novel processing schemes, data fusion across sensors and orbital regimes, and tactical data products delivered to the user.[25] Its Transport Layer is an optically interconnected LEO constellation designed for assured, resilient, low-latency military data links, with lower latency treated as critical for time-sensitive targets.[26] In that setting the value is not bulk compute. It is the ability to fuse sensor data, maintain custody, and move targeting-quality products through a resilient network quickly enough to matter.

DARPA’s programs point in the same direction from a different angle. Blackjack explicitly aimed to develop payload and mission-level autonomy software and demonstrate autonomous orbital operations, including on-orbit distributed decision processors, inside a resilient LEO military network.[27] Oversight is even more direct about the operational reason: current practices that rely on human operators and individual ground-station workflows do not scale well, increase latency, and reduce tactical utility. The program is trying to enable autonomous constant custody of up to 1,000 targets from space assets in contested environments by coordinating satellite and ground resources.[28] That is a second defense rationale for orbital compute: not just moving data, but making enough decisions in or near the constellation that the system can keep tracking, prioritizing, and cueing when waiting on the ground becomes a liability.

In that setting, higher cost per watt can be acceptable when the system preserves access, shortens decision cycles, and keeps data within a defined operational boundary. Power, thermal, and replacement costs remain. The requirement is different.

Why broad terrestrial substitution still fails

The terrestrial case keeps improving while the orbital case keeps asking for stacked miracles. JLL projects global datacenter capacity to rise from 103 gigawatts to 200 gigawatts by 2030.[20] Google reports a 2024 fleet-average power usage effectiveness, or PUE, of 1.09, where 1.0 would mean every watt drawn reaches the computing equipment. AWS reports a 2024 global PUE of 1.15 and a best site at 1.04, plus a global water usage effectiveness of 0.15 liters per kilowatt-hour.[21][22] Uptime Institute says outage frequency and severity have declined for four straight years, even while power and network failures remain valid concerns.[23] None of that makes terrestrial infrastructure easy. It does make the comparison harder for orbit.

The gap between orbital proposals and terrestrial reality shows up in a small set of recurring constraints that compound.

The energy model depends on favorable orbital geometry. A dawn-dusk SSO with sunlight fraction near 0.98 is a best-case envelope where generation is steady and storage stays within reach. Outside that envelope the system changes quickly. In generic LEO, sunlight fraction drops, eclipse periods lengthen, and the design inherits an energy-storage problem that grows with load. Array area expands to compensate. Batteries grow to cover the longer gap. The same compute payload that looks merely difficult in one orbit starts looking bulky and punitive in another. The supposed power advantage in orbit therefore comes attached to a narrow set of favorable operating conditions rather than to LEO in general.

Heat rejection follows the same pattern. Radiative cooling works, but as load rises radiator area becomes a design driver rather than a supporting subsystem. Tens of thousands of square meters of radiator area across a fleet are enough to change bus geometry, pointing requirements, deployment logic, and total system mass. At that point the machine stops looking like a compute node with thermal support and starts looking like a radiator field that happens to carry processors. The engineering burden does not disappear because the vacuum is cold. It becomes visible in surface area, orientation, and structural overhead.[10][11]

Communications introduces a different kind of ceiling. Inter-satellite links improve routing and reduce dependence on individual passes, but they do not remove the need to exit through licensed ground infrastructure. Sustained service still depends on gateways, spectrum allocations, coordination rules, and atmospheric conditions at the final hop. Those are slower to expand than internal mesh capacity, and harder to duplicate cleanly. So internal throughput can grow faster than the system’s ability to turn that traffic into usable Earth service. A constellation can become better connected in space while still remaining bottlenecked at the ground crossing.[15][17]

Replacement cadence and shell occupancy become infrastructure problems in their own right. The more the architecture depends on favorable sun-synchronous bands, the less plausible it becomes to talk about orbital compute as though it were escaping congestion rather than redistributing it into a more selective set of shells. Between the competition for the most desirable positions and the debris avoidance required to operate there, new constellations face steep challenges. ESA’s 2025 space environment report says about 40,000 objects are currently tracked in orbit, about 11,000 of them active payloads. It estimates more than 1.2 million debris objects above one centimeter and more than 50,000 above ten centimeters. Within some heavily used LEO bands around 550 kilometers, the density of debris capable of causing damage is already on the same order as the density of active satellites. ESA also says 2024 added more than 3,000 tracked debris objects from fragmentation events and that even with zero new launches, debris would keep growing because fragmentation adds debris faster than natural reentry removes it.[24] That is the operational setting into which megascale compute fleets would be deployed.

Finally, the public economic case still depends on launch cost collapse plus manufacturing scale plus servicing maturity plus long-lived power-and-thermal structures. Google’s preprint is candid about this. Project Suncatcher is a moonshot. It says the system design depends on cheaper launch, near-continuous sunlight geometry, high-bandwidth optical links, radiation-tolerant hardware, and safe formation control at scale.[2][8] That is useful honesty. It is a very long way from “the grid is crowded, therefore AI training should move to orbit.”

When space becomes a place people belong to

There may come a time when the strongest argument for off-world compute has nothing to do with strain on Earth at all. By then many of the old objections will have been solved into routine. Launch will be ordinary. Traffic discipline will be real. Routes between worlds will be managed with the same seriousness that harbors and air corridors once demanded. Human beings will not be visiting space in brief and fragile intervals. They will be living there long enough for routine to appear.

Picture a large habitat turning slowly in the dark, its interior lit with the borrowed calm of morning. Along the curve of the cylinder, windows are opening. In a workshop near the axis, a fabricator is already awake, reading tolerances from a part that must fit on the first try because the ship waiting outside cannot afford another week in dock. Far below, under a different band of light, someone is tending a grove that exists because thousands of people intend to remain where they are. A child asks a question in one district, and an answer arrives from a library stored only a few kilometers away, not from a planet half a second distant and sometimes hidden behind weather, politics, or war.

In such a place, computation would simply be part of the habitat itself. It would live in the walls and under the decks and behind the sealed doors that most residents never think about, the way earlier cities forgot to marvel at pipes, substations, and archives once those things became reliable. The machines would be close because the life around them would be close. A habitat that governs its own air, repairs its own structures, teaches its own children, and keeps its own calendar would also keep its own memory. It would need somewhere to hold its designs, its records, its maps, its learned habits, and the countless quiet judgments that let a settlement pass from improvisation into permanence.

The same would be true beyond orbit. A town on the Moon would not want every meaningful decision to climb out of its gravity well, cross the void, and return with Earth’s delay folded into it. The settlement would want its own compute and control nearby. Distance changes the terms of dependence. A community becomes durable when it can remember for itself, model for itself, and act with enough confidence to meet the hour it is in. A community that can mine, heal, build, and endure under a foreign sky will eventually want more than a link home. It will want decision-making capacity that does not depend on the round trip to Earth.

As those places multiply, the harder task would be keeping them in working relation. A delayed cargo transfer from lunar orbit could shift a repair sequence at a shipyard, which could push a docking window at a habitat and force another settlement to stretch stores for an extra week. A radiation storm moving through cislunar space could scramble traffic priorities across several destinations at once. That kind of world would need more than radios and good will. It would need shared records that stay current, models that remain coherent across distance, and trusted systems for carrying schedules, decisions, and operational history from one place to the next without routing every dependency back through Earth.

In that setting, space-based datacenters become much easier to justify. They would grow alongside terrestrial infrastructure rather than in opposition to it. They would be part of the ordinary equipment of a species living in more than one place. Earth would still carry most of the computational weight for a very long time. It would still be denser, richer, and easier in almost every practical respect. But durable settlements create local memory, local coordination needs, and regional networks of dependence. Some computing would move outward for the same reason archives, ports, and hospitals appear wherever a place stops being temporary. Once people build lives that are meant to last, they also build the means to think, remember, and continue where they are.

Sources

[1] FCC Space Bureau, “Space Bureau Accepts for Filing SpaceX’s Application for Orbital Datacenters,” Feb. 4, 2026. https://docs.fcc.gov/public/attachments/DA-26-113A1.pdf

[2] Google, “Project Suncatcher explores powering AI in space,” Nov. 4, 2025. https://blog.google/innovation-and-ai/technology/research/google-project-suncatcher/

[3] ESA, “Knowledge beyond our planet: space-based datacenters,” Aug. 5, 2024. https://www.esa.int/Enabling_Support/Preparing_for_the_Future/Discovery_and_Preparation/Knowledge_beyond_our_planet_space-based_data_centers

[4] Axiom Space and SpaceBilt, “Axiom Space, Spacebilt Announce Orbital Datacenter Node Aboard International Space Station,” Sept. 16, 2025. https://www.axiomspace.com/release/axiom-space-spacebilt-announce-orbital-data-center-node

[5] R. G. N. Meegama, “O-RAID: a satellite constellation architecture for ultra-resilient global data backup,” Scientific Reports 16, 8062 (2026). https://www.nature.com/articles/s41598-026-38784-1

[6] NASA Small Spacecraft Systems Virtual Institute, “3.0 Power.” https://www.nasa.gov/smallsat-institute/sst-soa/power-subsystems/

[7] NASA Engineering and Safety Center, “The Need to Bake Out Silicone Based Thermal Control Coatings.” https://www.nasa.gov/centers-and-facilities/nesc/the-need-to-bake-out-silicone-based-thermal-control-coatings/

[8] Google, “Towards a future space-based, highly scalable AI infrastructure system design,” 2025. https://services.google.com/fh/files/misc/suncatcher_paper.pdf

[9] ESA, “Polar and Sun-synchronous orbit.” https://www.esa.int/ESA_Multimedia/Images/2020/03/Polar_and_Sun-synchronous_orbit

[10] NASA Small Spacecraft Systems Virtual Institute, “7.0 Thermal Control,” 2024 State-of-the-Art report. https://www.nasa.gov/smallsat-institute/sst-soa/thermal-control/

[11] Rhett Allain, “Could AI Datacenters Be Moved to Outer Space?” WIRED, Feb. 20, 2026. https://www.wired.com/story/could-we-put-ai-data-centers-in-space/

[12] Andrew McCalip, “Economics of Orbital vs Terrestrial Datacenters.” https://andrewmccalip.com/space-datacenters

[13] Tim Fernholz, “Why the economics of orbital AI are so brutal,” TechCrunch, Feb. 11, 2026. https://techcrunch.com/2026/02/11/why-the-economics-of-orbital-ai-are-so-brutal/

[14] Glenn Zorpette, “How Stupid Would It Be to Put Datacenters in Space?” IEEE Spectrum, Feb. 26, 2026. https://spectrum.ieee.org/orbital-data-centers

[15] NASA, “Optical Communications Overview,” Sept. 20, 2023. https://www.nasa.gov/technology/space-comms/optical-communications-overview/

[16] NASA, “NASA’s Record-Breaking Laser Demo Completes Mission,” Sept. 25, 2024. https://www.nasa.gov/directorates/somd/space-communications-navigation-program/nasas-record-breaking-laser-demo-completes-mission/

[17] New America, “Chapter I. Fueling Connectivity from Space: Spectrum Sharing and Coexistence,” 2025. https://www.newamerica.org/insights/leo-satellites/chapter-i-fueling-connectivity-from-space-spectrum-sharing-and-coexistence/

[18] New America, “Chapter II. The Final Economic Frontier: Satellite Competition in Low Earth Orbit,” 2025. https://www.newamerica.org/insights/leo-satellites/chapter-ii-the-final-economic-frontier-satellite-competition-in-low-earth-orbit/

[19] Congressional Budget Office, Large Constellations of Low-Altitude Satellites: A Primer, May 17, 2023. https://www.cbo.gov/system/files/2023-05/58794-satellite-primer.pdf

[20] JLL, “Global datacenter sector to nearly double to 200GW amid AI infrastructure boom,” Jan. 6, 2026. https://www.jll.com/en-us/newsroom/global-data-center-sector-to-nearly-double-to-200gw-amid-ai-infrastructure-boom

[21] Google Datacenters, “Power usage effectiveness.” https://datacenters.google/efficiency/

[22] Amazon Sustainability, “AWS Cloud.” https://sustainability.aboutamazon.com/environment/the-cloud/asdi

[23] Uptime Institute, “Annual Outage Analysis 2025.” https://uptimeinstitute.com/about-ui/press-releases/uptime-announces-annual-outage-analysis-report-2025

[24] ESA, “ESA Space Environment Report 2025,” Apr. 1, 2025. https://www.esa.int/Space_Safety/Space_Debris/ESA_Space_Environment_Report_2025

[25] Space Development Agency, “Tracking.” https://www.sda.mil/tracking/

[26] Space Development Agency, “Transport.” https://www.sda.mil/transport/

[27] DARPA, “Blackjack.” https://www.darpa.mil/research/programs/blackjack

[28] DARPA, “Oversight.” https://www.darpa.mil/research/programs/oversight