Datacenters in Space

A research project examining whether orbital data centers are a viable future architecture or a seductive misreading of physics. It tests the idea against power, cooling, networking, cost, workload fit, and long-horizon strategic rationale.

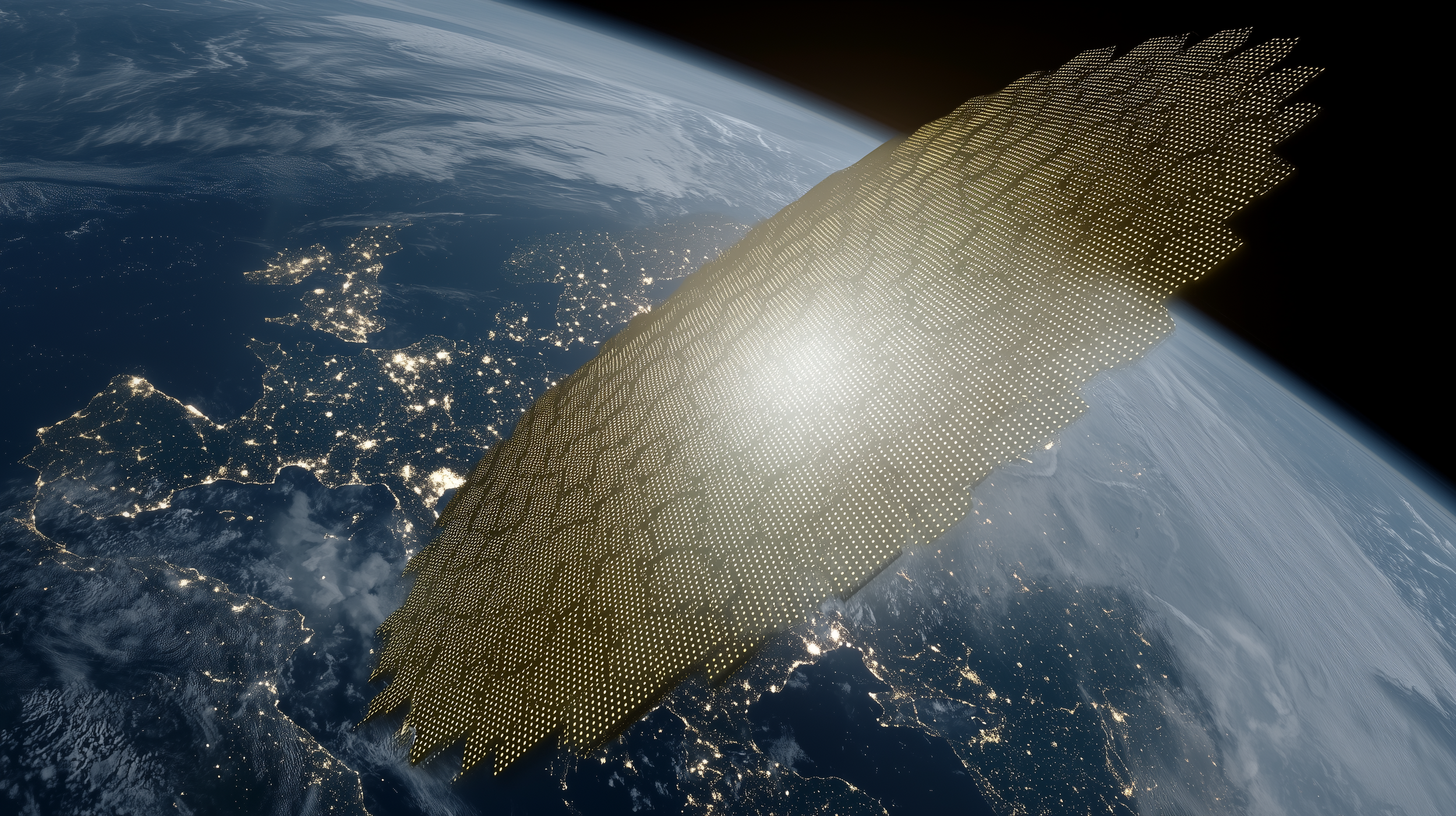

Artistic concept of a constellation of datacenter satellites equipped with optical mesh communications.

By Deconstruct.com

I began this project in response to growing interest in putting data centers into orbit. The idea is intriguing, and to some it may even seem inevitable, but it carries a dense set of physical, economic, and operational constraints. I started by grounding the topic in current proposals and available evidence, then pushed further by exploring whether better architectures could design some of those constraints away.

Most of the technical details behind real spacecraft, orbital infrastructure, and mega-constellations remain proprietary. To work around that, this project combines public reporting, NASA and ESA guidance, industry signals, and explicit architectural inference.

Taken seriously, the concept narrows fast: orbital compute becomes most credible as a distributed layer for space-native processing and network-adjacent services, not as a general-purpose replacement for terrestrial cloud infrastructure.

The full research lineage is available below, including the main paper, appendices, intermediate framing documents, and the prompt used to generate part of the analysis. Jump to the papers below.

People have been imagining large engineered systems in orbit for decades. Long before current filings for orbital data-center constellations and solar-powered compute satellites, writers and engineers were already drawn to the same basic intuition: above the atmosphere, sunlight is strong, line of sight is broad, and infrastructure is freed from some of the siting constraints that shape the ground.

But the modern version of the idea inherits a far more concrete bill than the older vision ever had to carry. Power generation, eclipse losses, storage mass, heat rejection, launch cadence, replacement, communications, debris exposure, and governance all sit inside the design boundary. Once those constraints are made explicit, “orbital compute” stops looking like one futuristic category and starts breaking into separate claims with different buyers, different architectures, and different burdens of proof.

Our essay series is an attempt to sort those claims cleanly. The first essay asks the headline question: when does orbital compute make sense, and for whom? The second looks further out and asks what would have to change, across launch, servicing, assembly, autonomy, and governance, for a more mature orbital compute regime to become plausible at all. The third turns to debris, churn, and the orbital commons, and asks whether some scaling paths degrade the environment they depend on. A final essay cashes out the machinery and shows what a real orbital compute architecture would actually have to look like.

Start Here

There are three ways to explore orbital compute and the broader “datacenters in space” question.

If you want the clearest entry point, start with the essays. They distill the research into a more readable argument.

If you want the full research path, including appendices, intermediate models, and supporting analysis, use the archive below.

If you want to move through the material more visually and build intuition as you go, keep scrolling.

Essay Series

These essays distill the research into a more readable argument. Start here if you want the synthesized version.

Research Paper Archive

These documents show the full research progression, appendices, and supporting analysis.

Further Reading: CBO’s Satellite Constellation Primer

This illustration represents a three-dimensional depiction of the viewing area (field of regard) of satellites in LEO (1,000 km), MEO (18,000 km), and GEO (35,786 km). The coverage area represented here takes into account the Earth’s geometry but not other variables, such as any sensor-related limitations on viewing angle.

If the viewing angle must be at least 20 degrees above the horizon, the sensor’s field of regard (intermediate circles) is smaller than the satellite’s field of regard (outer circles). A camera with a very wide field of view might be able to view everywhere within its field of regard, but most sensors look at an area that is much smaller than their field of regard at any given moment (smallest oval).
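The geometry behind these two captions can be sketched in a few lines. This is a minimal illustration, assuming a spherical Earth with a mean radius of 6,371 km; the function names are my own and are not taken from the CBO report. It computes how much of Earth's surface falls inside a satellite's field of regard, with and without a minimum elevation angle.

```python
import math

EARTH_RADIUS_KM = 6371.0  # assumption: spherical Earth, mean radius


def central_angle_deg(altitude_km, min_elevation_deg=0.0):
    """Earth central angle from the sub-satellite point to the coverage edge."""
    eps = math.radians(min_elevation_deg)
    ratio = EARTH_RADIUS_KM * math.cos(eps) / (EARTH_RADIUS_KM + altitude_km)
    return math.degrees(math.acos(ratio)) - min_elevation_deg


def coverage_fraction(altitude_km, min_elevation_deg=0.0):
    """Fraction of Earth's surface inside the field of regard (a spherical cap)."""
    lam = math.radians(central_angle_deg(altitude_km, min_elevation_deg))
    return (1.0 - math.cos(lam)) / 2.0


# Altitudes are the ones named in the caption above.
for name, alt_km in [("LEO", 1000.0), ("MEO", 18000.0), ("GEO", 35786.0)]:
    horizon = coverage_fraction(alt_km)          # geometric horizon only
    limited = coverage_fraction(alt_km, 20.0)    # 20 degree minimum elevation
    print(f"{name}: {horizon:.1%} to horizon, {limited:.1%} above 20 degrees")
```

The 20 degree constraint costs a LEO satellite proportionally far more coverage than a GEO satellite, which is one reason low constellations need so many spacecraft.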

The Congressional Budget Office’s *Large Constellations of Low-Altitude Satellites: A Primer* is a useful reference for readers who want the basics of orbital regimes, coverage, constellation design, debris, and lifecycle cost in one place. CBO prepared the report for the Senate Armed Services Committee as objective, nonpartisan analysis, which makes it a better source for fundamentals than most promotional or speculative material in this area. Rather than recreate that groundwork here, I recommend using it as a companion reference for the diagrams and baseline mechanics behind the discussion. Several useful excerpts from the report appear in this section.

Satellites in lower altitudes communicate with users on the ground more quickly but require more satellites in the chain. The distance between each satellite is shorter for lower altitude orbits, however. Overall, the total transit time for LEO is about half that of MEO and a quarter that of GEO.

A constellation of 72 satellites, separated into 6 planes with 12 satellites in each plane (left panel), provides almost complete global coverage. In the right panel, blue cones show the field of regard of the satellite sensors. Some small gaps between sensors’ coverage exist, primarily in the equatorial region. All 72 satellites are orbiting at an 80 degree inclination.
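To make the 72-satellite layout concrete, here is a small sketch that places satellites in evenly spaced planes using Walker-style notation. The plane count, satellites per plane, and inclination come from the caption; the 180 degree spread of ascending nodes and the inter-plane phasing value are assumptions of mine, since the excerpt does not state them.

```python
def walker_layout(total_sats=72, planes=6, inclination_deg=80.0,
                  raan_spread_deg=180.0, phasing=1):
    """Return one dict per satellite with its plane, slot, and orbital angles.

    raan_spread_deg and phasing are illustrative assumptions, not CBO values.
    """
    if total_sats % planes:
        raise ValueError("total_sats must divide evenly into planes")
    per_plane = total_sats // planes
    sats = []
    for p in range(planes):
        # Ascending nodes spaced evenly across the chosen spread.
        raan = raan_spread_deg * p / planes
        for s in range(per_plane):
            # Even in-plane spacing plus a small offset between adjacent planes.
            arg_lat = (360.0 * s / per_plane
                       + 360.0 * phasing * p / total_sats) % 360.0
            sats.append({
                "plane": p,
                "slot": s,
                "inclination_deg": inclination_deg,
                "raan_deg": raan,
                "arg_lat_deg": arg_lat,
            })
    return sats


layout = walker_layout()
print(len(layout), "satellites;", "first of plane 1:", layout[12])
```

Feeding these angles into an orbit propagator would be the next step toward reproducing the coverage gaps near the equator that the caption describes; that step is outside this sketch.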

Orbital Environment

Space-based compute would not enter a blank environment. It would be deployed into orbital shells with different debris burdens, radiation conditions, and failure consequences.

“For orbital data centers, the tempting argument is that higher orbits offer stable thermal and power conditions and lower drag, but that “benefit” is shadowed by vastly extended debris persistence times and governance externalities.”

If we imagine moving a data center into orbit, literally, the first problem to solve is power generation. A terrestrial facility can lean on the grid, on substations, on diesel backup, on utility planning, and on a cooling plant that sits on the same site. An orbital system has no such inheritance. It carries its own power plant, its own storage, its own heat rejection hardware, its own communications layer, its own propulsion, and its own end-of-life plan. The exercise stops being about racks very quickly. It becomes a problem in orbital infrastructure.

What the physical models look like

Design Progression

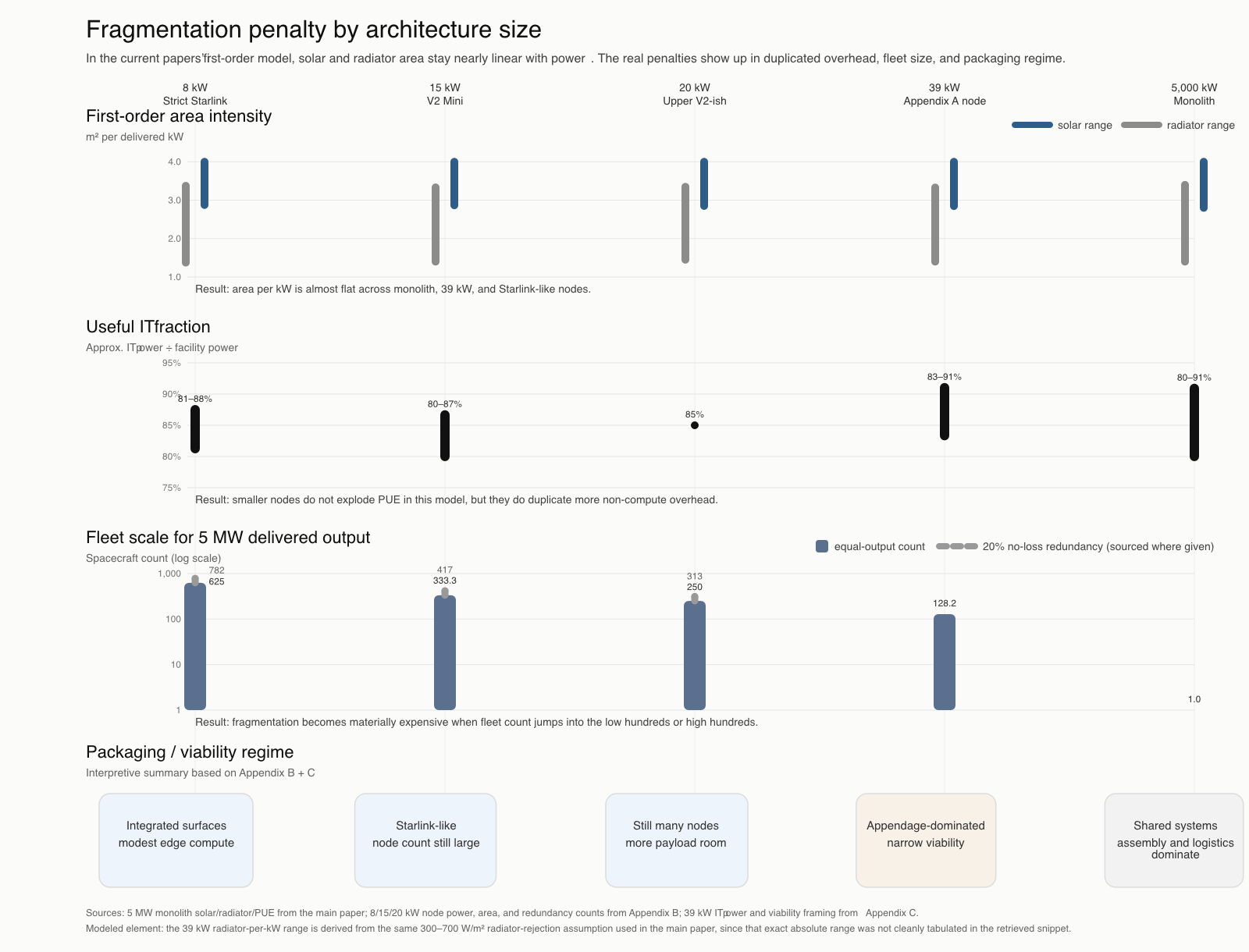

The project’s architecture evolved by removing assumptions that only make sense on Earth. As each terrestrial convenience dropped away, the design shifted toward smaller nodes, stronger networking assumptions, and narrower workload ambitions.

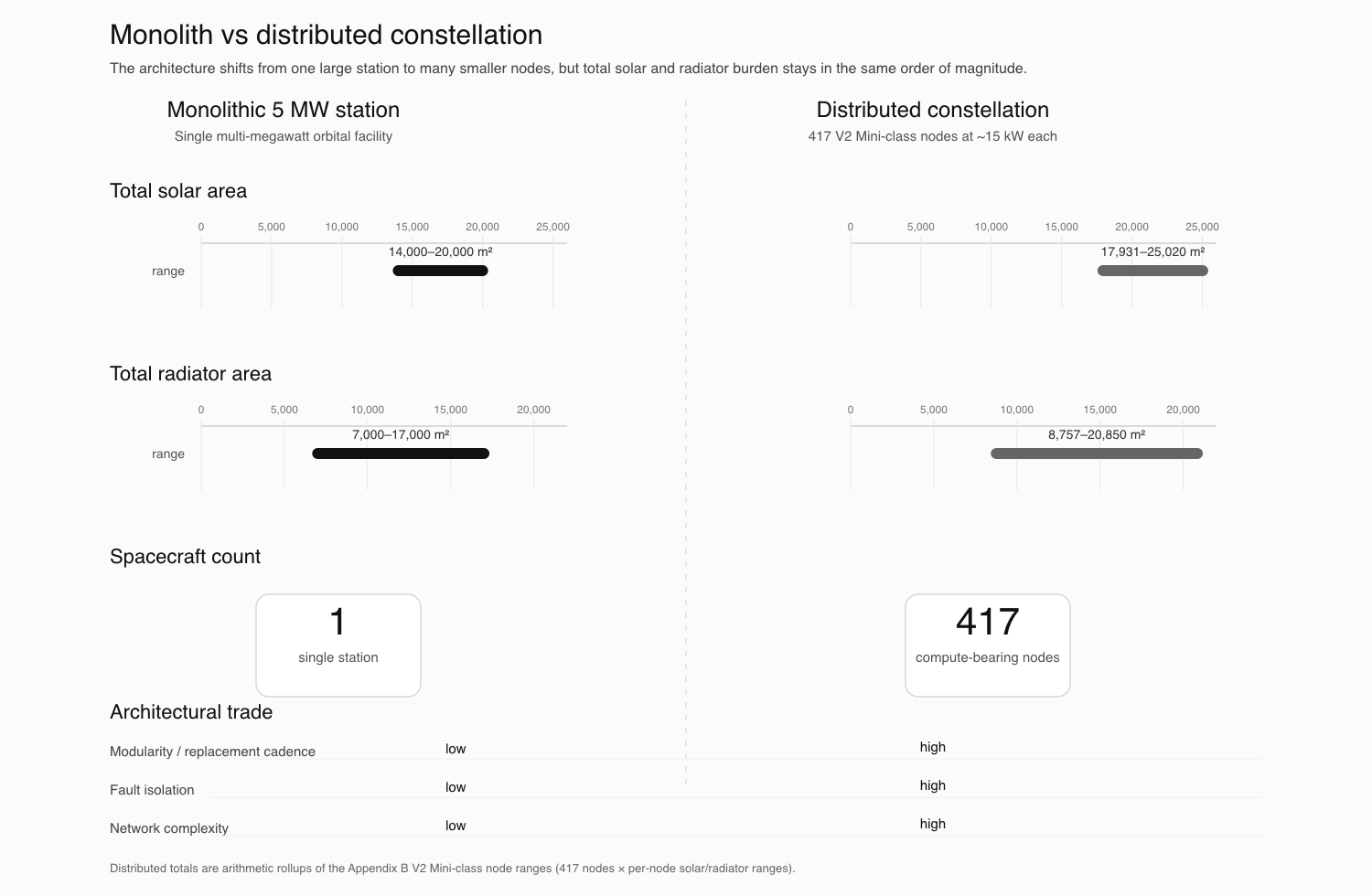

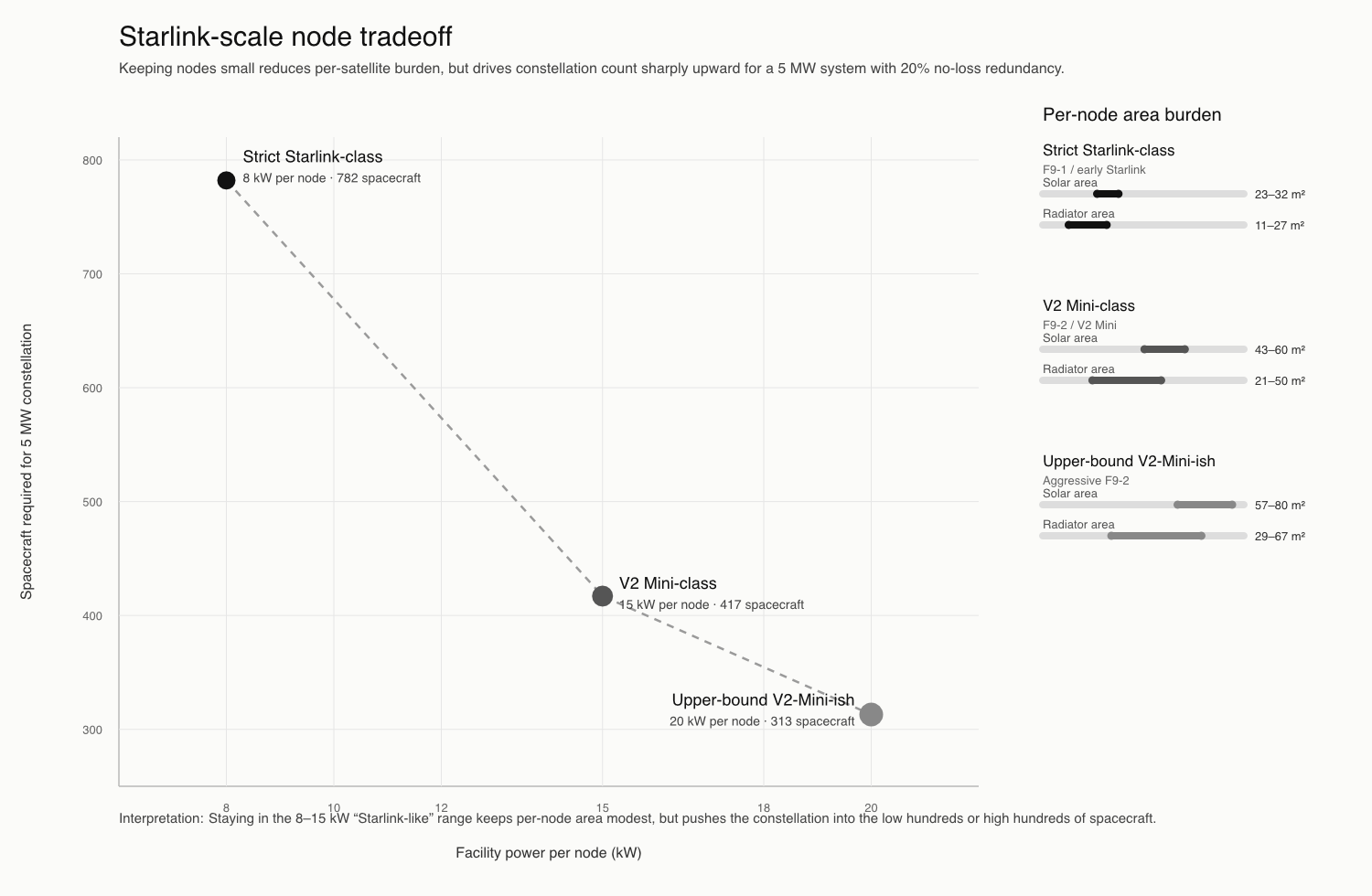

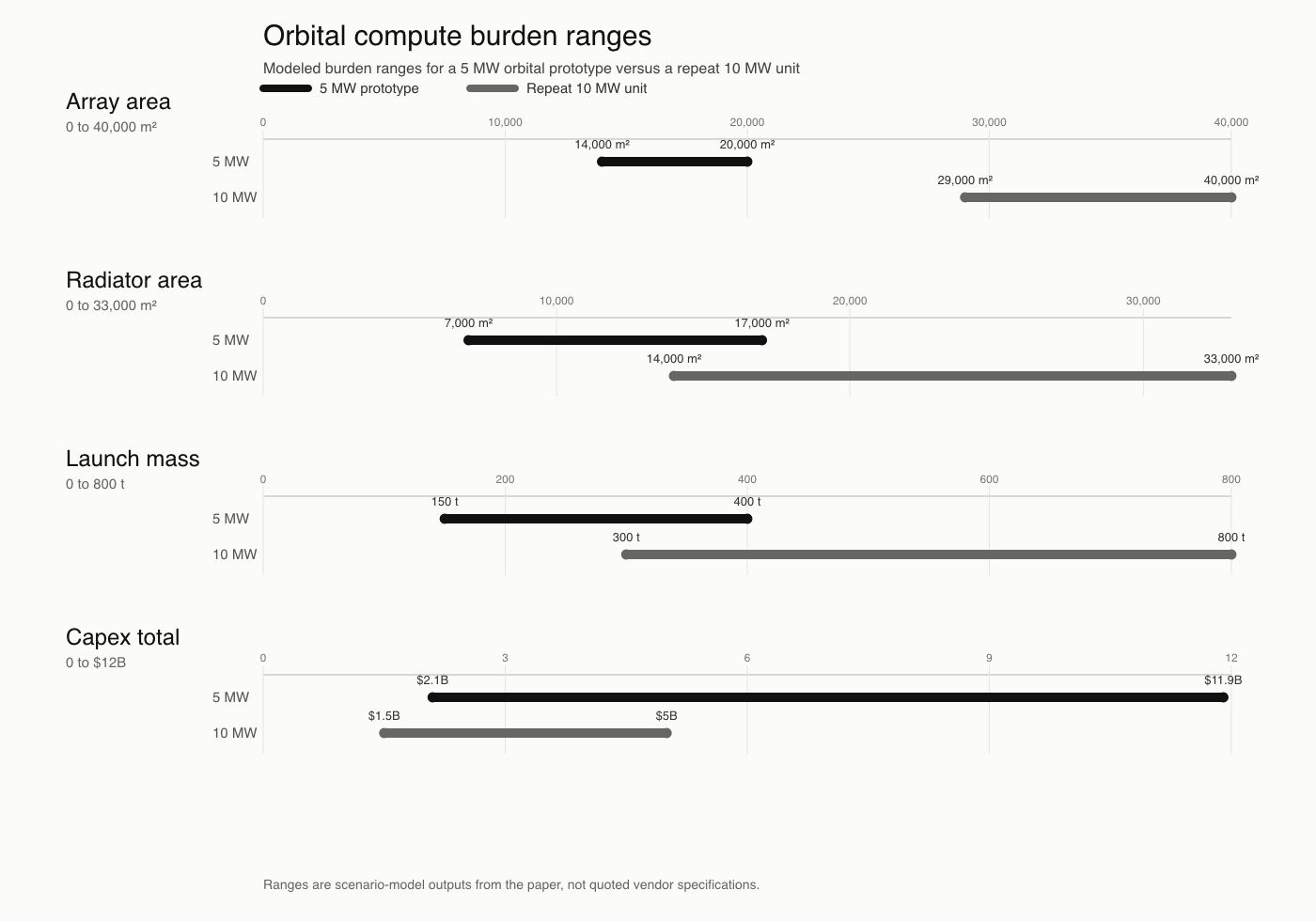

The power requirements of a single 5 MW orbital facility are immense, and quickly expose the limitations of a monolithic design. Solar arrays alone scale to thousands of square meters, and when paired with radiator area for heat rejection at a similar order of magnitude, the result is a single large, exposed structure that is difficult to justify operationally.
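A back-of-envelope check shows where those areas come from. This is a rough sketch under stated assumptions, not the sizing model used in the papers: 30 percent cell efficiency, a two-sided radiator at 300 K with emissivity 0.9, all electrical power ending up as heat, and no allowance for eclipse, storage, pointing losses, degradation, or absorbed sunlight and Earth infrared.

```python
SOLAR_CONSTANT_W_M2 = 1361.0       # mean solar irradiance near Earth
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def solar_array_area_m2(power_w, cell_efficiency=0.30):
    """Array area needed in full sunlight (efficiency is an assumption)."""
    return power_w / (SOLAR_CONSTANT_W_M2 * cell_efficiency)


def radiator_area_m2(heat_w, temp_k=300.0, emissivity=0.9, sides=2):
    """Panel area needed to radiate heat_w to deep space.

    Ignores absorbed sunlight and Earth infrared, so real radiators
    would need to be larger than this idealized figure.
    """
    flux_per_side = emissivity * STEFAN_BOLTZMANN * temp_k ** 4
    return heat_w / (flux_per_side * sides)


facility_w = 5e6  # the 5 MW facility discussed above
print(f"solar array: {solar_array_area_m2(facility_w):,.0f} m^2")
print(f"radiator:    {radiator_area_m2(facility_w):,.0f} m^2")
```

Under these assumptions the array comes out at roughly 12,000 square meters and the radiator at roughly 6,000, the same order of magnitude, and both grow once eclipse and thermal environment losses are included.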

A single large orbital facility is difficult to justify. It is exposed, hard to assemble, difficult to cool, and expensive to deorbit responsibly.

Distributed nodes are the first architectural correction. A constellation does not eliminate the burden, but it aligns better with launch, redundancy, and failure isolation.

Once distributed, the network becomes part of the compute model. Inter-satellite links, ground visibility, relay layers, latency tolerance, and radiation tradeoffs all start shaping what the system can actually do.

Kessler Syndrome Is a Material System Risk

The debris environment is no longer an abstract policy issue. ESA’s 2025 Space Environment Report describes low Earth orbit as increasingly crowded, notes that around 550 km there is now the same order of magnitude of threatening debris as active satellites, and reports that 2024 added at least 3,000 tracked debris objects through major and smaller fragmentation events.

ESA also makes the uncomfortable point that even without new launches, catastrophic collisions can continue to rise because fragmentation adds debris faster than natural reentry removes it. A large compute structure inserted into those bands has to be evaluated against that reality, not against a conceptual blank sky.

This visualization depicts objects in orbit around Earth as of February 2024. It begins with approximately 31,000 orange dots, each representing a trackable object in the publicly available database. Green dots then fade in, highlighting around 9,300 active (currently operational) satellites. By NASA Scientific Visualization Studio

Check out more visualizations, including current Starlink positions and more than 500 pieces of debris left over from a 2009 collision. [NASA]

“The Kessler effect is a runaway chain reaction of collisions producing more debris, also called collisional cascading.”

Read our ChatGPT-assisted research paper, “Kessler Syndrome and Orbital Data Center Architectures: Cascade Plausibility, Failure Modes, and Prevention,” for a detailed breakdown of the problem, why it matters, and what we are already doing about it.

Timelapse photograph of Starlink satellites overhead. By M. Lewinsky/Creative Commons Attribution 2.0 - https://noirlab.edu/public/images/ann22005a/, CC BY 4.0, https://commons.wikimedia.org/w/index.php?curid=128247445

Why orbital compute becomes a systems problem

Low Earth Orbit (LEO) satellites can communicate opportunistically with ground stations when they are in range and when bandwidth allows.

Peer-to-peer optical communication between satellites allows multi-hop routing to the best available ground station.
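Multi-hop routing of this kind can be sketched as a shortest-path search over whatever links are currently up. The example below uses Dijkstra's algorithm as a stand-in; it is not how any real constellation routes traffic. The node names and delay values are hypothetical, and a real system would also have to handle links that appear and disappear as satellites move.

```python
import heapq


def best_ground_route(links, source, ground_stations):
    """Return (total_delay_ms, path) to the cheapest reachable ground station.

    links maps each node to {neighbor: delay_ms}. Returns None if no
    ground station is reachable from source.
    """
    dist = {source: 0.0}
    prev = {}
    heap = [(0.0, source)]
    visited = set()
    while heap:
        delay, node = heapq.heappop(heap)
        if node in visited:
            continue
        visited.add(node)
        if node in ground_stations:
            # First ground station popped is the cheapest; rebuild its path.
            path = [node]
            while path[-1] != source:
                path.append(prev[path[-1]])
            return delay, path[::-1]
        for neighbor, link_delay in links.get(node, {}).items():
            candidate = delay + link_delay
            if candidate < dist.get(neighbor, float("inf")):
                dist[neighbor] = candidate
                prev[neighbor] = node
                heapq.heappush(heap, (candidate, neighbor))
    return None


# Hypothetical snapshot: three satellites, two ground stations, delays in ms.
example_links = {
    "sat-a": {"sat-b": 4.0, "sat-c": 9.0},
    "sat-b": {"sat-a": 4.0, "sat-c": 3.0, "gs-1": 12.0},
    "sat-c": {"sat-a": 9.0, "sat-b": 3.0, "gs-2": 2.0},
}
print(best_ground_route(example_links, "sat-a", {"gs-1", "gs-2"}))
```

In this snapshot the cheapest route from `sat-a` takes two optical hops to reach `gs-2`, even though `sat-b` has a direct but slower downlink to `gs-1`.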

For workloads that tolerate longer latency, such as control-plane tasks, large-volume data transfers, or opportunistic compute, the Medium Earth Orbit (MEO) path offers higher bandwidth and longer visibility windows per ground station.

One place this shows up immediately is networking.

State of the field

This timeline pulls together the most relevant demonstrations, studies, launches, filings, and announced systems behind the topic. Its purpose is not to imply maturity. It is to show where orbital compute has moved from experiment into real institutional and commercial attention, and where the rhetoric still runs ahead of the engineering.

Research Paper Archive

If you prefer a more distilled, synthesized, user-friendly format, consider starting with our essay series.

Essays

Research Papers

| Document Title | Document Process Stage | Summary |
|---|---|---|
| Main Paper | Core paper | Baseline feasibility analysis of orbital data centers, concluding the concept is strongest as a niche space-native compute model and weak as a mainstream cloud architecture. |
| Appendix A | Architecture appendix | Recasts the original monolithic concept into distributed orbital compute nodes and tests whether a constellation materially changes the result. |
| Appendix B | Scale appendix | Constrains the concept against Starlink-scale spacecraft realities and argues for a much smaller, more credible node size. |
| Appendix C | Research expansion | Extends the analysis with corrected networking assumptions, layered LEO/MEO architecture, SoC-native compute framing, and long-horizon workload fit. |
| Final Paper (v2) | Synthesis of all papers combined | Integrated synthesis of all prior analyses, refining the architecture, correcting assumptions, and concluding that orbital data centers are viable only within narrowly defined, space-native compute niches rather than as a general-purpose cloud replacement. |
| Appendix C - Research Framing Memo | Research framing | Foundational memo establishing research direction, assumptions, and analytical scope for the Appendix C expansion. |
| Appendix C - Deep Research Prompt | Prompt artifact | Full structured prompt used to generate deeper analysis, included for methodological transparency. |
| Kessler Syndrome, Orbital Debris, and the Orbital Data Center Trap | Risk analysis | Explores cascading orbital debris risks and how large-scale compute constellations intersect with Kessler syndrome dynamics. |